6 Best Microservice Testing Strategies to Follow in Different Architectures

Today, there is no dearth of information on the web about what microservices are and how organizations are transforming their development architecture around them. However, very few articles discuss the test strategies to follow when testing microservice-based solutions and applications. This blog aims to fill that gap.

Before moving on to the strategy, test areas, and test types, let us look at a few definitions of microservices.

Microservices are a software development technique: a variant of the service-oriented architecture (SOA) style that structures an application as a collection of loosely coupled services. In a microservices architecture, services are fine-grained and the protocols are lightweight.

A microservice architecture builds software as a suite of collaborating services. Microservices are often integrated using REST over HTTP. They connect over networks and make use of “external” data stores.

Microservice architecture, or simply microservices, is a distinctive method of developing software systems that focuses on building single-function or single-purpose modules with well-defined interfaces and operations. This approach has grown popular as enterprises look to become more Agile and move toward DevOps and continuous testing. Microservices can help create scalable, testable software that can be delivered frequently, as often as weekly or even daily.

A microservices architecture lets admins or users load only the services they need, which improves deployment times, especially when the services are packaged in containers.

Microservices allow changes to be made only when and where they are needed. With a microservices architecture, each functional component of the application can be monitored individually.

Why Do You Need a Special Strategy to Test Microservices?

Microservices need a different testing strategy. They follow a different architecture and have many integrations, both with other microservices within the organization and with the outside world (third-party integrations). They also require close collaboration among the different teams/squads developing individual microservices. Moreover, they are single-purpose services that are deployed independently and frequently.

The Microservices Testing Strategies

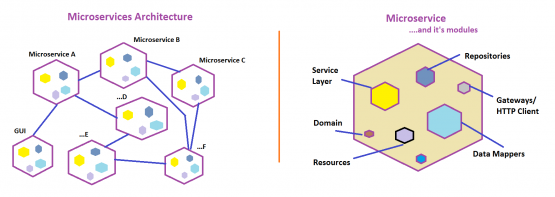

Before discussing the various microservices testing strategies, let us look at a self-explanatory diagram to understand the composition of microservices.

Different types of testing need to be performed at different layers of microservices. Let us look at them using an illustration of three closely collaborating microservices. Assume Microservices A, B, and C work together with many other microservices (not shown in the picture). The diagram illustrates all the testing types and their boundaries, using one microservice (Microservice B) as an example.

Unit Testing

A unit test exercises the smallest piece of testable software in the application to determine whether it behaves as expected.

Unit tests are typically written at the class level or around a small group of related classes. The smaller the unit under test, the easier it is to express its behavior in a unit test. An important distinction in unit testing is whether or not the unit under test is isolated from its collaborators.

As the diagram above shows, unit tests are written for the internal modules within a given microservice, addressing each internal module individually. Test doubles such as stubs and mocks are often used to replace dependencies where necessary. Unit tests are typically written by your development team. As a QA engineer, you should ensure that unit tests are included as an integral part of their code commits.
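As a minimal sketch of the idea, assuming a hypothetical `OrderService` module whose only collaborator is a repository, the Python test below isolates the unit by replacing the repository with a `unittest.mock.Mock` test double. The class and method names are illustrative and not taken from any real codebase.

```python
import unittest
from unittest.mock import Mock


class OrderService:
    """Hypothetical internal module of a microservice (the unit under test)."""

    def __init__(self, repository):
        # The repository is a collaborator; the tests replace it with a test double.
        self.repository = repository

    def order_total(self, order_id):
        """Return the order total in cents, rejecting orders with no lines."""
        lines = self.repository.get_lines(order_id)
        if not lines:
            raise ValueError(f"order {order_id} has no lines")
        return sum(price * qty for price, qty in lines)


class OrderServiceTest(unittest.TestCase):
    def test_total_is_sum_of_price_times_quantity(self):
        repo = Mock()
        repo.get_lines.return_value = [(250, 2), (100, 1)]  # (price in cents, quantity)
        self.assertEqual(OrderService(repo).order_total("o-1"), 600)
        # The mock also lets us check how the collaborator was called.
        repo.get_lines.assert_called_once_with("o-1")

    def test_order_without_lines_is_rejected(self):
        repo = Mock()
        repo.get_lines.return_value = []
        with self.assertRaises(ValueError):
            OrderService(repo).order_total("o-2")
```

Run it with `python -m unittest` like any other test module. Because the repository is mocked, the test needs no database and runs in milliseconds, which is what allows unit suites to grow large.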

Integration Testing

Unit testing alone does not guarantee the behavior of the system. You must also cover the internal modules as they work together to form a complete microservice, as well as the interactions those modules make with remote dependencies.

An integration test verifies the communication paths and interactions between the modules and their dependencies.

Integration tests group modules and test them as a subsystem to verify that they collaborate as intended to achieve some larger behavior. Examples of module integration tests include verifying the communication between the repositories and the service layer, or between the service layer and the resources. Examples for external dependencies include tests against data stores, caches, and other microservices.

Integration tests are often just an extended version of the unit tests on the internal modules. It is therefore a good idea to delegate writing automated integration tests to your developers, because they can easily extend their existing unit test frameworks for this purpose. These tests use actual instances of the modules and dependencies involved (e.g., API resources, service layer, databases, and message queues). Testers can take on this activity instead, provided the infrastructure team can supply an integration environment with all the actual dependencies deployed.

In either case, write only a handful of test cases; do not automate an exhaustive list, because other testing types (component tests) will cover the complete functional testing. These tests should act as a health check between the integration layers, with a small test suite size.
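The sketch below shows what such a small integration suite might look like, assuming a hypothetical repository and service layer backed by a relational database. SQLite's in-memory mode (Python's built-in `sqlite3`) stands in for the real data store here; in an actual integration environment you would point the repository at the deployed database instead. It reuses the unit test framework (`unittest`) but wires in the real repository rather than a mock, so the SQL itself is exercised.

```python
import sqlite3
import unittest


class OrderRepository:
    """Hypothetical repository that talks to a real SQL database."""

    def __init__(self, conn):
        self.conn = conn
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS order_lines "
            "(order_id TEXT NOT NULL, price INTEGER NOT NULL, qty INTEGER NOT NULL)"
        )

    def add_line(self, order_id, price, qty):
        self.conn.execute(
            "INSERT INTO order_lines (order_id, price, qty) VALUES (?, ?, ?)",
            (order_id, price, qty),
        )
        self.conn.commit()

    def get_lines(self, order_id):
        cursor = self.conn.execute(
            "SELECT price, qty FROM order_lines WHERE order_id = ?", (order_id,)
        )
        return cursor.fetchall()


class OrderService:
    """Hypothetical service layer that depends on the repository."""

    def __init__(self, repository):
        self.repository = repository

    def order_total(self, order_id):
        lines = self.repository.get_lines(order_id)
        if not lines:
            raise ValueError(f"order {order_id} has no lines")
        return sum(price * qty for price, qty in lines)


class RepositoryServiceIntegrationTest(unittest.TestCase):
    def setUp(self):
        # A real database connection, not a mock.
        self.conn = sqlite3.connect(":memory:")
        self.repo = OrderRepository(self.conn)
        self.service = OrderService(self.repo)

    def tearDown(self):
        self.conn.close()

    def test_total_uses_only_lines_of_requested_order(self):
        self.repo.add_line("o-1", 250, 2)
        self.repo.add_line("o-1", 100, 1)
        self.repo.add_line("o-2", 999, 1)  # must not leak into o-1's total
        self.assertEqual(self.service.order_total("o-1"), 600)

    def test_unknown_order_is_rejected(self):
        with self.assertRaises(ValueError):
            self.service.order_total("missing")
```

Two tests for one integration point is in keeping with the "health check" role: exhaustive functional cases belong in the component suite.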

Component Testing

With unit and integration testing, you can gain confidence in the quality of the internal modules that make up the microservice. However, these tests cannot guarantee that the microservice as a whole satisfies the business requirements. For that, you must test the integrated microservice end-to-end while isolating it from external dependencies and other collaborating microservices.

A component test verifies the end-to-end functionality of the given microservice in isolation by replacing its dependencies with test doubles and mock services.

In a microservice architecture, the components are the services themselves. By writing tests at this granularity, the contract of the API is driven through tests from a consumer’s perspective. Isolation of the service is achieved by replacing external collaborators with test doubles and using internal API endpoints to probe or configure the service.

The microservice diagram above shows that component tests focus on one microservice at a time: for example, testing Microservice A in isolation, then B, then C, and so on. If Microservice A collaborates closely with Microservice B and you want to test A in isolation, you replace Microservice B with a mock service, integrate the mock with Microservice A, and test A end-to-end.
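To make this concrete, here is a small, self-contained sketch using only Python's standard library. It assumes a hypothetical Microservice A that exposes `GET /availability/<sku>` and calls Microservice B's `GET /stock/<sku>`; both endpoints are invented for illustration. A lightweight local HTTP server plays the role that a tool such as WireMock would play, and B's base URL is injected into A as configuration, which is what makes the substitution possible.

```python
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


def send_json(handler, status, payload):
    """Write a JSON response from inside a request handler."""
    body = json.dumps(payload).encode()
    handler.send_response(status)
    handler.send_header("Content-Type", "application/json")
    handler.send_header("Content-Length", str(len(body)))
    handler.end_headers()
    handler.wfile.write(body)


class QuietHandler(BaseHTTPRequestHandler):
    def log_message(self, *args):  # silence per-request logging during tests
        pass


class FakeServiceB(QuietHandler):
    """Mock of Microservice B with canned stock levels (hypothetical API)."""

    canned_stock = {"sku-1": 5, "sku-2": 0}

    def do_GET(self):
        sku = self.path.rsplit("/", 1)[-1]
        if sku in self.canned_stock:
            send_json(self, 200, {"sku": sku, "stock": self.canned_stock[sku]})
        else:
            send_json(self, 404, {"error": "unknown sku"})


def make_service_a(service_b_url):
    """Microservice A under test; B's base URL is injected as configuration."""

    class ServiceA(QuietHandler):
        def do_GET(self):
            sku = self.path.rsplit("/", 1)[-1]
            try:
                with urllib.request.urlopen(f"{service_b_url}/stock/{sku}") as resp:
                    stock = json.load(resp)["stock"]
                send_json(self, 200, {"sku": sku, "available": stock > 0})
            except urllib.error.HTTPError:
                send_json(self, 404, {"sku": sku, "available": False})

    return ServiceA


def start(handler_cls):
    """Serve handler_cls on a free local port in a background thread."""
    server = ThreadingHTTPServer(("127.0.0.1", 0), handler_cls)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server, f"http://127.0.0.1:{server.server_address[1]}"


def get_json(url):
    """Return (status, parsed JSON body), including for 4xx responses."""
    try:
        with urllib.request.urlopen(url) as resp:
            return resp.status, json.load(resp)
    except urllib.error.HTTPError as err:
        return err.code, json.load(err)


def run_component_test():
    """Exercise A end-to-end through its HTTP API, with B replaced by the mock."""
    fake_b, b_url = start(FakeServiceB)
    service_a, a_url = start(make_service_a(b_url))
    try:
        return {sku: get_json(f"{a_url}/availability/{sku}")
                for sku in ("sku-1", "sku-2", "sku-9")}
    finally:
        for server in (service_a, fake_b):
            server.shutdown()
            server.server_close()
```

The test talks to A only through its public HTTP interface, as a consumer would, while the canned data in `FakeServiceB` makes the in-stock, out-of-stock, and unknown-SKU (404) paths repeatable.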

Testing such components in isolation provides many benefits:

- Limiting the scope to a single component makes it possible to thoroughly acceptance-test the behavior encapsulated by that component while keeping the tests fast to execute.

- Isolating the component from its peers using test doubles/mocks avoids any complex behavior those peers may have. It also provides a controlled testing environment in which any applicable error cases can be triggered repeatably.

The QA team is expected to define the coverage, write the test scenarios, and automate them all. You can use standard API testing tools such as SoapUI, ReadyAPI, REST-assured, and HTTP Client; this is essentially typical web service testing. It relies extensively on mock services that replace the dependencies so the microservice can be tested in isolation. WireMock, MockLab, Mockable.io, and ServiceV are a few examples.

Contract Testing

With unit, integration, and component testing, you can achieve high coverage of the modules that make up a microservice. However, the quality of the complete solution is not assured unless your tests cover all the microservices working together. Contract testing of external dependencies and end-to-end testing of the whole system help provide this.

A contract test is a test at the boundary of an external service, verifying that it meets the contract expected by a consuming service.

When two microservices, or a microservice and one of its dependent services, collaborate, a contract forms between them. Suppose Service A sends or requests data from Service B, and Service B responds to all of Service A's incoming requests. In that case, there is a contract between Service A and Service B: Service A is known as the consumer, and Service B as the provider.

Contract testing takes place in two steps:

- First, the consumer publishes a contract for its provider. The contract looks like a typical API schema (it can be a JSON file) describing all the possible requests and the response data and formats, including headers, body, status codes, URI, path, and verb. At the end of the first step, the contracts are published to the provider directly or to a central location.

- Second, the provider retrieves the contracts published by its consumer(s) and verifies them against its latest code. Any failures may indicate breaking changes the provider has made to its own API schema.
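The two steps can be sketched in plain Python as follows. This is a hand-rolled illustration of the consumer-driven idea, not Pact's API: the contract layout, the `"string"`/`"integer"` type markers, and the `/stock/<sku>` endpoint are all assumptions made for the example. Body fields are matched by type rather than by exact value, in line with the focus on formats rather than data.

```python
import json

# Step 1 (consumer side): Service A publishes the interactions it relies on.
CONTRACT_JSON = json.dumps({
    "consumer": "service-a",
    "provider": "service-b",
    "interactions": [
        {
            "description": "get stock for a known SKU",
            "request": {"method": "GET", "path": "/stock/sku-1"},
            "response": {"status": 200,
                         "headers": {"Content-Type": "application/json"},
                         "body": {"sku": "string", "stock": "integer"}},
        },
        {
            "description": "get stock for an unknown SKU",
            "request": {"method": "GET", "path": "/stock/does-not-exist"},
            "response": {"status": 404,
                         "headers": {"Content-Type": "application/json"},
                         "body": {"error": "string"}},
        },
    ],
})

# Type markers used in the (hypothetical) contract format above.
TYPE_CHECKS = {
    "string": lambda v: isinstance(v, str),
    "integer": lambda v: isinstance(v, int) and not isinstance(v, bool),
}


def provider_handle(method, path):
    """Stand-in for Service B's current code: returns (status, headers, body)."""
    stock = {"sku-1": 5}
    sku = path.rsplit("/", 1)[-1]
    if method == "GET" and path.startswith("/stock/") and sku in stock:
        return 200, {"Content-Type": "application/json"}, {"sku": sku, "stock": stock[sku]}
    return 404, {"Content-Type": "application/json"}, {"error": "unknown sku"}


# Step 2 (provider side): replay every interaction against the provider.
def verify_contract(contract_json, handler):
    """Return a list of failures; an empty list means the provider complies."""
    failures = []
    for interaction in json.loads(contract_json)["interactions"]:
        name = interaction["description"]
        req, expected = interaction["request"], interaction["response"]
        status, headers, body = handler(req["method"], req["path"])
        if status != expected["status"]:
            failures.append(f"{name}: status {status} != {expected['status']}")
        for header, value in expected["headers"].items():
            if headers.get(header) != value:
                failures.append(f"{name}: header {header} mismatch")
        for field, type_name in expected["body"].items():
            if field not in body or not TYPE_CHECKS[type_name](body[field]):
                failures.append(f"{name}: field '{field}' missing or not {type_name}")
    return failures
```

`verify_contract(CONTRACT_JSON, provider_handle)` returns an empty list. If the provider were changed to rename `stock` to `quantity`, the same call would report a failure for the first interaction, which is the kind of breaking change the provider would then need to communicate to its consumers.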

This way, the provider team can keep all its consumers (different groups within the organization or other clients/customers) informed of schema changes, which helps the consumers plan their change management well in advance.

QA teams on both sides are expected to produce contract tests. Because the two steps happen at different times, contract testing also uses an interim mock service as a bridge between the consumer and the provider. Pact is a great example of a tool for implementing contract testing.

Contract tests are not functional tests. They are defined for the entire microservice and focus more on input/output formats and types than actual values. Hence, the suite size is expected to be smaller than the component test suites.

End-to-end Testing

End-to-end tests verify that the system as a whole meets its business goals, irrespective of the component architecture in use. The whole system should be treated as a black box, and the tests should exercise the fully deployed system as much as possible, manipulating it through public interfaces such as GUIs and service APIs. Strictly, no mocking is used at this level.

Because a microservice architecture involves more moving parts for the same behavior, end-to-end tests add value by covering the gaps between the services. You do not have to write tests for each of the microservices involved; just identify a few high-level business workflows or user journeys (end-to-end journeys) and automate them. Any UI and API automation library can be used; for example, Selenium combined with REST-assured works well.

Because end-to-end tests can be very difficult to write and maintain, write as few of them as possible.

Performance Testing

While the importance of performance testing is widely known, it is worth discussing at which levels to perform it for microservices. It is recommended that you do it at two levels:

- Microservice level (for each microservice when it is deployed)

- System level (when all microservices are deployed to work together)

Ideally, use separate performance environments for this. The QA team can use lightweight tools such as JMeter or Locust to measure the performance of each microservice. For a detailed performance analysis of the entire system, once all the microservices are deployed and integrated to work together, I recommend engaging a specialized performance team.
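For a quick microservice-level check, a few lines of Python can fire concurrent requests and report latency percentiles. The sketch below is not a substitute for JMeter or Locust; it assumes a hypothetical `/health` endpoint and, to stay self-contained, starts a local stub in place of a deployed microservice. In a performance environment you would call `load_test` with the real service URL instead.

```python
import statistics
import threading
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class StubHandler(BaseHTTPRequestHandler):
    """Stand-in for a deployed microservice; answers every GET with 200."""

    def do_GET(self):
        body = b'{"status": "UP"}'
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):  # keep output quiet
        pass


class StubServer(ThreadingHTTPServer):
    request_queue_size = 64  # larger accept backlog for concurrent clients


def timed_get(url):
    """Return (succeeded, latency in milliseconds) for one GET request."""
    start = time.perf_counter()
    try:
        with urllib.request.urlopen(url, timeout=5) as resp:
            resp.read()
            ok = resp.status == 200
    except OSError:  # covers URLError/HTTPError (non-2xx) and socket timeouts
        ok = False
    return ok, (time.perf_counter() - start) * 1000


def load_test(url, total_requests=200, concurrency=10):
    """Send total_requests GETs from `concurrency` threads and summarize latency."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(timed_get, [url] * total_requests))
    latencies = [ms for _, ms in results]
    cuts = statistics.quantiles(latencies, n=100)  # 99 cut points; cuts[94] is p95
    return {
        "requests": len(results),
        "errors": sum(1 for ok, _ in results if not ok),
        "p50_ms": statistics.median(latencies),
        "p95_ms": cuts[94],
    }


def run_against_local_stub():
    server = StubServer(("127.0.0.1", 0), StubHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        return load_test(f"http://127.0.0.1:{server.server_address[1]}/health")
    finally:
        server.shutdown()
        server.server_close()
```

Reporting percentiles rather than an average keeps a few slow outliers visible; the error count and p95 could then be compared against whatever thresholds your team agrees on for each microservice.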

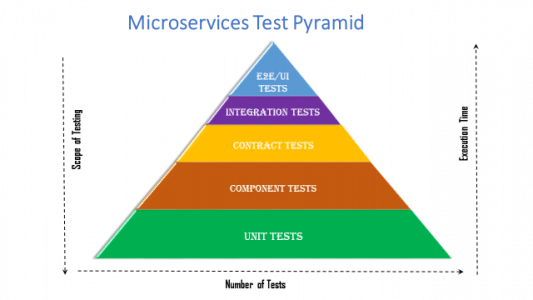

Microservices Test Pyramid

Unit Tests are at the bottom of the pyramid and form the largest test suites. They are written for each microservice and all of its internal modules/components. Their execution time is very low, and their coverage is the most granular.

Component Tests are second to Unit Tests in size, as they form the full functional test suite for each microservice. They cover all possible cases: boundary values, edge cases, positive and negative cases, and so on.

Contract Test suites are fairly large and look similar to component test suites, but their coverage is limited to the contracts, meaning the input and output formats and types. You do not test the functionality. For example, for each resource URI/endpoint of an API, you might have one test for a 200 response, another for a 400 response, and one for a 415 response if applicable.

Integration Tests are usually only a handful, with two or three tests for each integration among the internal modules and external APIs, so the suite size is small.

End-to-end Tests are minimal-sized suites whose scenarios concentrate on the major workflows and user journeys. Their execution time is high, as they may involve GUIs, but their scope is limited.

Conclusion

With an understanding of what microservices are, how to test them, which testing types to incorporate, and where to execute the tests with respect to the environments, you are ready to propose the test plan and microservices testing strategy that best fit your organization's or client's needs. However, the strategy will need customization based on many factors, such as the cost of implementing all the testing types, resource capacity and availability, project delivery timescales, the cost of test automation engineers, infrastructure and hardware (e.g., cloud), budget, and more.

In the next part of this blog, you will learn when to run the different test suites in CI/CD using the Quality Gates model.
